This article and source code are intended strictly for educational and security research purposes. Misuse for malicious purposes, including unauthorised system access or malware development, is explicitly prohibited. By using this material you agree to our Terms and Conditions. All use is at your own risk.

Ophion is an Intel VT-x Type-2 hypervisor that virtualizes an already running Windows system from a kernel driver. It passes common hypervisor detection tools used by anti-cheats (EAC, BattlEye), anti-viruses, and VM-detection libraries like VMAware and hvdetecc.

This article covers how it works, what detection vectors it defeats and how, and the reasoning behind each design decision.

- Virtualizing a Running System

- VMCS Configuration

- EPT Design

- Stealth Mechanisms

- Interrupt Handling

- Private Host CR3

- VMCALL Gate

- VM-Exit Handler Structure

- XSETBV Validation

- Other VM-Exit Handling

- Stealth Toggles

- Detection Test Results

- Known Limitations and Future Work

- Building and Loading

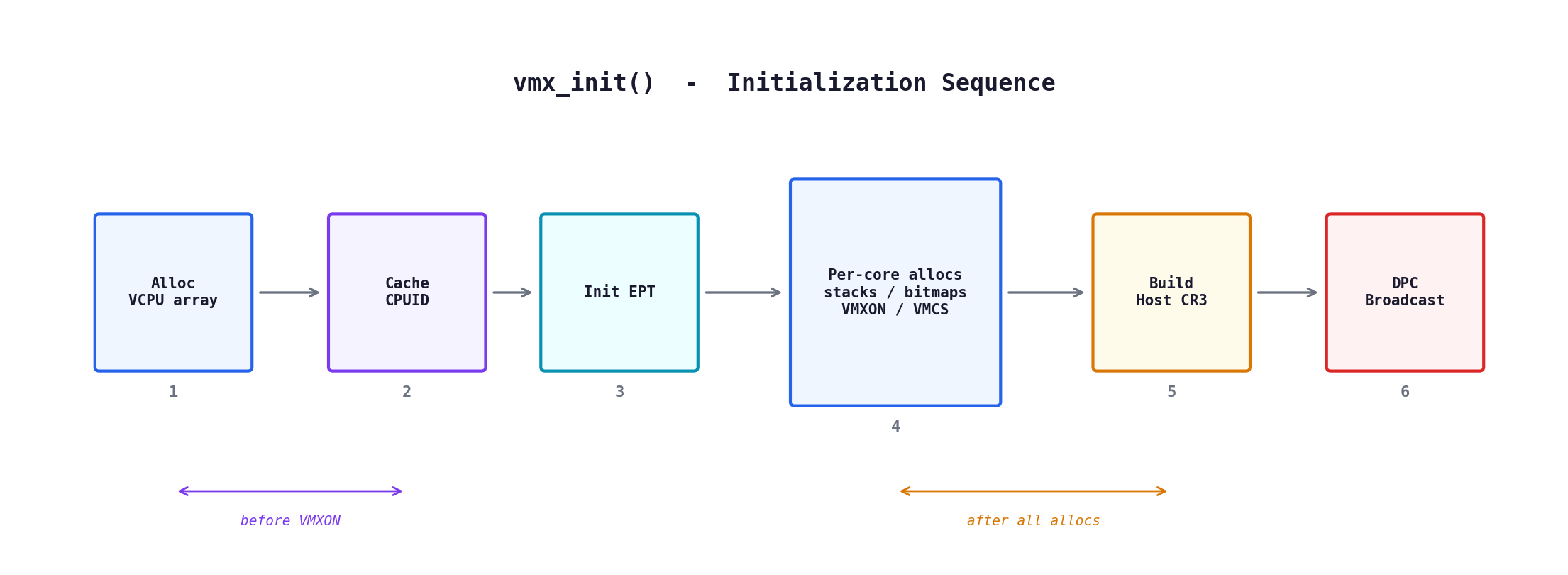

Ophion loads as a standard Windows kernel driver (.sys). On DriverEntry, it:

- Allocates per-VCPU structures for every logical processor

- Caches bare-metal CPUID responses (before VMX changes anything)

- Initializes EPT with identity-mapped 2MB large pages

- Allocates VMM stacks, MSR bitmaps, I/O bitmaps, VMXON/VMCS regions per core

- Deep-copies kernel page tables into private host CR3

- Broadcasts a DPC to every logical processor

The broadcast uses KeGenericCallDpc -- an undocumented kernel API (not in WDK headers, manually prototyped) that fires a DPC on every logical processor and synchronizes completion. Each DPC callback saves the current CPU state (GPRs, segment registers, RFLAGS), enters VMX operation (VMXON), programs the VMCS, and executes VMLAUNCH. The guest resumes at the instruction after the save. The OS keeps running normally -- now under the hypervisor.

On unload, each core issues a VMCALL(VMXOFF) via DPC broadcast. The handler saves guest RIP/RSP/CR3, executes __vmx_off(), then immediately restores guest CR3 and clears CR4.VMXE:

```c
__vmx_off();
__writecr3(guest_cr3);                             // back to the system page tables
__writecr4(__readcr4() & ~CR4_VMX_ENABLE_FLAG);    // clear CR4.VMXE (bit 13)
```

Without the CR3 restore, the system is left running on the private HOST_CR3 -- a stale snapshot that doesn't map any kernel memory allocated after the hypervisor loaded. The first access to post-init memory faults with bugcheck 0xA.

The CPUID cache must be built before VMXON because, once the system runs as a guest, every CPUID it executes traps to the hypervisor, so the untouched hardware responses have to be recorded first. The private host CR3 must be built after all allocations because it is a snapshot of the kernel page tables: if you snapshot first and then allocate the VMM stacks, those stacks have no PTEs in the snapshot, and the first VM-exit will triple fault trying to access an unmapped stack.

Every VMCS field has a reason. Here's what Ophion programs and why.

Pin-based controls: External-interrupt exiting, NMI exiting, and virtual NMIs. None of the three is a default1 bit (the pin-based default1 bits are 1, 2 and 4), so Ophion requests all of them explicitly. External-interrupt exiting must be paired with ACK-on-exit: without the acknowledgement, the interrupt stays pending at the LAPIC and causes another VM-exit as soon as the guest resumes, an infinite VM-exit loop that ends in a TDR and a black screen. Virtual NMIs are explicitly requested because NMI-window exiting requires them (SDM: "if virtual NMIs is 0, NMI-window exiting must be 0"); without virtual NMIs, dynamically setting NMI-window exiting to defer an NMI would cause a VM-entry failure.

Primary processor-based controls: TSC offsetting, MSR bitmaps, I/O bitmaps, and activate secondary controls. These are the only primary controls Ophion explicitly requests. CR3-load/store exiting, INVLPG exiting, HLT exiting, MOV-DR exiting, RDTSC exiting, and others may be forced on by must-be-1 bits depending on the CPU (CR3-load and CR3-store exiting, for instance, are default1 controls), and Ophion handles all of them if forced. The vmx_adjust_controls function enforces the must-be-0 and must-be-1 bits from the capability MSR:

```c
UINT32 vmx_adjust_controls(UINT32 requested, UINT32 capability_msr)
{
    MSR msr_val = {0};

    msr_val.Flags = __readmsr(capability_msr);
    requested &= msr_val.Fields.High;   // bit=0 in high -> must be zero
    requested |= msr_val.Fields.Low;    // bit=1 in low  -> must be one
    return requested;
}
```
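The two masking steps are easier to follow on concrete numbers. The sketch below is a userland variant (adjust_controls_raw is a hypothetical name) that takes the raw 64-bit capability value instead of calling __readmsr; the capability value used in the note after it is illustrative, not a reading from a specific CPU.

```c
#include <stdint.h>

/* Pin-based control bits (Intel SDM). */
#define PIN_EXTERNAL_INTERRUPT_EXITING (1u << 0)
#define PIN_NMI_EXITING                (1u << 3)
#define PIN_VIRTUAL_NMIS               (1u << 5)

/* Same rule as vmx_adjust_controls, with the capability MSR value passed in.
 * Low 32 bits:  allowed 0-settings (1 = control must be 1).
 * High 32 bits: allowed 1-settings (0 = control must be 0). */
static uint32_t adjust_controls_raw(uint32_t requested, uint64_t capability)
{
    uint32_t must_be_one = (uint32_t)(capability & 0xFFFFFFFFu);
    uint32_t may_be_one  = (uint32_t)(capability >> 32);

    requested &= may_be_one;   /* drop controls the CPU cannot set */
    requested |= must_be_one;  /* force default1 / must-be-1 controls */
    return requested;
}
```

With an illustrative capability of 0x0000007F00000016 (bits 1, 2 and 4 must be 1, bits 0-6 may be 1), requesting external-interrupt exiting, NMI exiting and virtual NMIs (0x29) yields 0x3F, while a request for bit 7 is silently dropped and only the must-be-1 bits (0x16) remain.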

Secondary processor-based controls: EPT, VPID, RDTSCP pass-through, INVPCID pass-through, XSAVES/XRSTORS pass-through. EPT and VPID are the core of the hypervisor; the other three are required because, when their enable bits are clear, RDTSCP, INVPCID, and XSAVES/XRSTORS raise #UD in the guest, breaking any software that uses them.

VM-exit controls: 64-bit host, save debug controls, ACK interrupt on exit. The ACK-on-exit bit is critical -- it makes the CPU acknowledge external interrupts at the LAPIC and store the vector in VMCS_VMEXIT_INTERRUPTION_INFORMATION. Without it, you can't properly re-inject interrupts.

VM-entry controls: IA-32e mode guest, load debug controls. Loads guest DR7 and IA32_DEBUGCTL on VM-entry so the guest sees correct debug state.

The VMCS guest state requires full segment descriptor programming. Ophion parses the current GDT to extract base, limit, access rights, and selector for all 8 segment registers (ES, CS, SS, DS, FS, GS, LDTR, TR). 64-bit system segments (TSS) use 16-byte descriptors, so the TR base requires reconstruction from both halves. Null selectors are marked unusable.
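A sketch of that descriptor parsing, assuming the GDT is visible as an array of 8-byte entries (as SGDT would give you) and that selectors reference the GDT rather than an LDT. seg_info and segment_from_gdt are hypothetical names; the bit positions follow the Intel SDM descriptor layout and the VMCS access-rights format, where bit 16 means "unusable".

```c
#include <stdint.h>

/* Hypothetical container for the values written to the VMCS guest-state area. */
typedef struct {
    uint16_t selector;
    uint64_t base;
    uint32_t limit;
    uint32_t access_rights;   /* VMCS access-rights format */
} seg_info;

#define AR_UNUSABLE (1u << 16)

/* gdt: the GDT as an array of 8-byte entries. Assumes TI = 0 (GDT selector). */
static void segment_from_gdt(const uint64_t *gdt, uint16_t selector, seg_info *out)
{
    out->selector = selector;
    if ((selector & ~7u) == 0) {          /* null selector */
        out->base = 0;
        out->limit = 0;
        out->access_rights = AR_UNUSABLE;
        return;
    }

    uint64_t desc = gdt[selector >> 3];

    /* base[23:0] in bits 16-39, base[31:24] in bits 56-63 */
    out->base = ((desc >> 16) & 0xFFFFFFull) | ((desc >> 32) & 0xFF000000ull);

    /* limit[15:0] in bits 0-15, limit[19:16] in bits 48-51 */
    out->limit = (uint32_t)((desc & 0xFFFF) | ((desc >> 32) & 0xF0000));
    if (desc & (1ull << 55))              /* G = 1: limit counted in 4 KB units */
        out->limit = (out->limit << 12) | 0xFFF;

    /* access byte (bits 40-47) -> AR[7:0]; AVL/L/D/G (bits 52-55) -> AR[15:12] */
    out->access_rights = (uint32_t)((desc >> 40) & 0xF0FF);

    /* S = 0: system descriptor (TSS/LDT). In long mode it is 16 bytes wide and
     * the low 32 bits of the second 8 bytes hold base[63:32]. */
    if (!(desc & (1ull << 44)))
        out->base |= (gdt[(selector >> 3) + 1] & 0xFFFFFFFFull) << 32;
}
```

For a busy 64-bit TSS (type 0xB, present, DPL 0) the access rights come out as 0x8B, and the upper half of the base is taken from the descriptor's second 8 bytes.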

Host selectors must have RPL and TI bits cleared per the SDM, or VM-entry fails:

```c
// 0xFFF8 clears RPL (bits 0-1) and TI (bit 2) without truncating selectors above 0xFF
__vmx_vmwrite(VMCS_HOST_CS_SELECTOR, asm_get_cs() & 0xFFF8);
__vmx_vmwrite(VMCS_HOST_SS_SELECTOR, asm_get_ss() & 0xFFF8);
__vmx_vmwrite(VMCS_HOST_TR_SELECTOR, asm_get_tr() & 0xFFF8);
// ... same for ES, DS, FS, GS
```

Other required guest-state and control fields:

```c
__vmx_vmwrite(VMCS_GUEST_VMCS_LINK_POINTER, ~0ULL);              // no VMCS shadowing
__vmx_vmwrite(VMCS_GUEST_DEBUGCTL, __readmsr(IA32_DEBUGCTL));
__vmx_vmwrite(VMCS_GUEST_DR7, 0x400);                           // SDM default (bit 10 always set)
__vmx_vmwrite(VMCS_GUEST_ACTIVITY_STATE, 0);                    // active
__vmx_vmwrite(VMCS_GUEST_INTERRUPTIBILITY_STATE, 0);            // no blocking
__vmx_vmwrite(VMCS_GUEST_PENDING_DEBUG_EXCEPTIONS, 0);
__vmx_vmwrite(VMCS_CTRL_TSC_OFFSET, 0);
__vmx_vmwrite(VMCS_CTRL_PAGEFAULT_ERROR_CODE_MASK, 0);          // all #PFs go to guest
__vmx_vmwrite(VMCS_CTRL_PAGEFAULT_ERROR_CODE_MATCH, 0);
```

SYSENTER fields (CS, EIP, ESP) are programmed for both guest and host from current MSR values. FS/GS bases are loaded from IA32_FS_BASE / IA32_GS_BASE.

The VCPU pointer is placed at the top of the VMM stack so the assembly entry point can retrieve it without calling any Windows API in VMX-root mode:

```c
*(PVIRTUAL_MACHINE_STATE *)((UINT64)vcpu->vmm_stack + VMM_STACK_SIZE - VMM_STACK_VCPU_OFFSET) = vcpu;
__vmx_vmwrite(VMCS_HOST_RSP, (UINT64)vcpu->vmm_stack + VMM_STACK_SIZE - 16);
__vmx_vmwrite(VMCS_HOST_RIP, (UINT64)asm_vmexit_handler);
```

In VMX-root mode, the current processor is identified via __readmsr(IA32_TSC_AUX) & 0xFFF -- the OS programs IA32_TSC_AUX with the processor number, and reading it is safe in root mode without any Windows API dependency.

CR0 mask = 0: Full pass-through. Guest CR0 writes never cause VM-exits.

CR4 mask = bit 13 (VMXE) when stealth is enabled:

```c
__vmx_vmwrite(VMCS_CTRL_CR4_GUEST_HOST_MASK, CR4_VMX_ENABLE_FLAG);   // 0x2000
__vmx_vmwrite(VMCS_CTRL_CR4_READ_SHADOW, __readcr4() & ~CR4_VMX_ENABLE_FLAG);
```

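Guest reads of CR4 take bit 13 from the read shadow, where VMXE is 0. A guest MOV to CR4 causes a VM-exit only when bit 13 of the value written differs from the shadow, i.e. when the guest tries to set VMXE. The handler keeps VMXE=1 in the real guest CR4 (VMCS_GUEST_CR4, required while in VMX operation) while the shadow shows VMXE=0.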
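The two hardware rules this relies on, modelled in userland as a sketch: when MOV to CR4 exits, and what MOV from CR4 returns. The function names are hypothetical; on real hardware the CPU applies this logic itself, Ophion only programs the mask and shadow.

```c
#include <stdbool.h>
#include <stdint.h>

#define CR4_VMXE (1ull << 13)

/* MOV to CR4 in VMX non-root exits only if a host-owned bit (set in the
 * guest/host mask) differs between the written value and the read shadow. */
static bool mov_to_cr4_exits(uint64_t value, uint64_t mask, uint64_t shadow)
{
    return ((value ^ shadow) & mask) != 0;
}

/* MOV from CR4 never exits: host-owned bits come from the shadow, the rest
 * from the real (VMCS guest) CR4. */
static uint64_t guest_visible_cr4(uint64_t real_cr4, uint64_t mask, uint64_t shadow)
{
    return (real_cr4 & ~mask) | (shadow & mask);
}
```

With the real CR4 at 0x2020 (PAE | VMXE), a mask of CR4_VMXE and a shadow of 0x20, the guest reads 0x20; toggling an unrelated bit such as PGE does not exit, while writing 0x2020 does.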

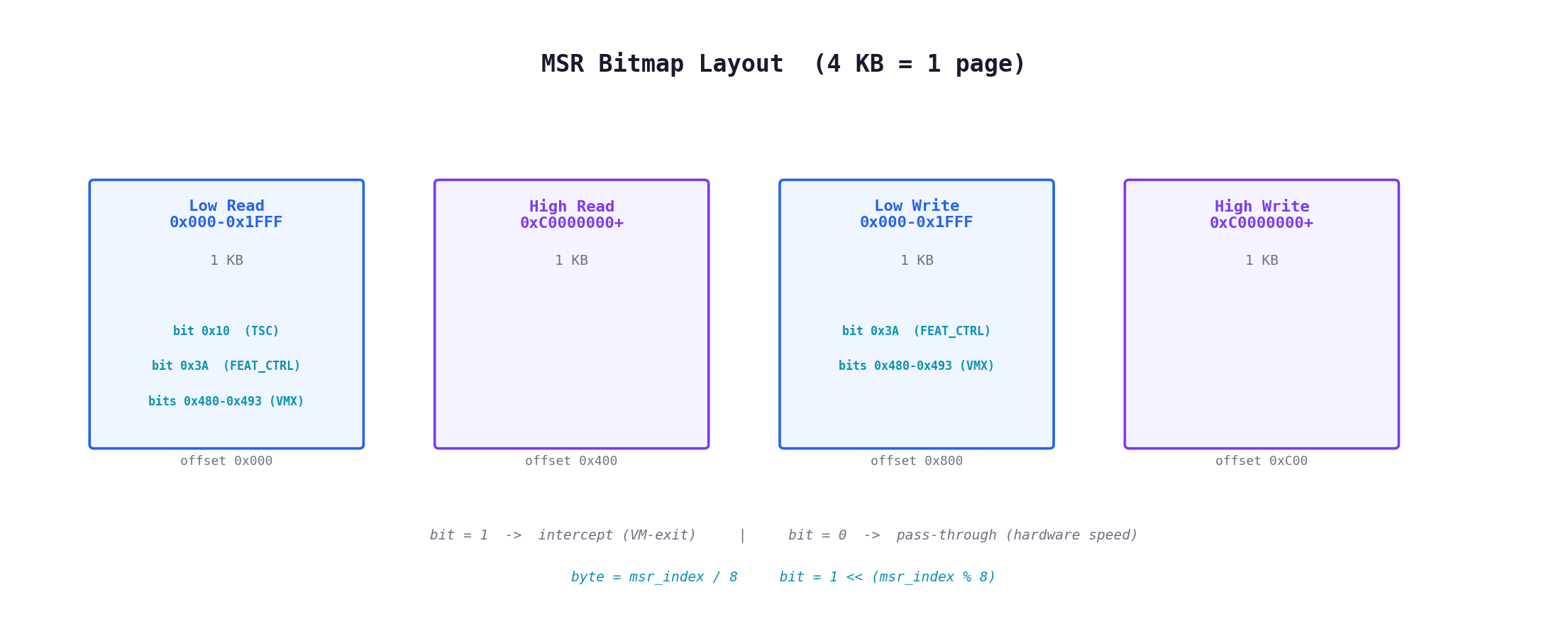

The MSR bitmap is 4 KB (one page), divided into four 1 KB regions: low-range read (0x000-0x1FFF), high-range read (0xC0000000-0xC0001FFF), low-range write, high-range write. A set bit means "intercept this MSR access." Ophion sets up interception at the byte level:

```c
// RDMSR(0x10) -- IA32_TIME_STAMP_COUNTER (read only)
((PUCHAR)vcpu->msr_bitmap_va)[0x10 / 8] |= (UCHAR)(1 << (0x10 % 8));

// IA32_FEATURE_CONTROL (0x3A) -- read + write
((PUCHAR)vcpu->msr_bitmap_va)[0x3A / 8] |= (UCHAR)(1 << (0x3A % 8));
((PUCHAR)vcpu->msr_bitmap_va)[0x800 + 0x3A / 8] |= (UCHAR)(1 << (0x3A % 8));

// VMX capability MSRs (0x480-0x493) -- read + write
for (UINT32 msr_idx = 0x480; msr_idx <= 0x493; msr_idx++)
{
    ((PUCHAR)vcpu->msr_bitmap_va)[msr_idx / 8] |= (UCHAR)(1 << (msr_idx % 8));
    ((PUCHAR)vcpu->msr_bitmap_va)[0x800 + msr_idx / 8] |= (UCHAR)(1 << (msr_idx % 8));
}
```

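The low-range write region starts at byte offset 0x800 (high-range read is at 0x400, high-range write at 0xC00). Every other bit in the bitmap is zero, so those MSRs pass through at hardware speed. MSRs outside both ranges have no bitmap bit at all and always cause a VM-exit.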
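The byte/bit arithmetic generalizes to any MSR once the four region offsets are known. The helper below is a hypothetical sketch, not Ophion's code: it also covers the high range and reports MSRs that have no bitmap bit, such as the 0x40000000-0x4FFFFFFF hypervisor range, whose RDMSR/WRMSR always exit.

```c
#include <stdbool.h>
#include <stdint.h>

/* Byte offsets of the four 1 KB regions in the 4 KB MSR bitmap (Intel SDM). */
#define BITMAP_READ_LOW   0x000  /* RDMSR, MSRs 0x00000000-0x00001FFF */
#define BITMAP_READ_HIGH  0x400  /* RDMSR, MSRs 0xC0000000-0xC0001FFF */
#define BITMAP_WRITE_LOW  0x800  /* WRMSR, low range  */
#define BITMAP_WRITE_HIGH 0xC00  /* WRMSR, high range */

/* Sets the intercept bits for one MSR. Returns false for MSRs outside both
 * ranges: they have no bitmap bit and exit unconditionally anyway. */
static bool msr_bitmap_intercept(uint8_t bitmap[4096], uint32_t msr, bool read, bool write)
{
    uint32_t index, read_base, write_base;

    if (msr <= 0x1FFFu) {
        index = msr;
        read_base = BITMAP_READ_LOW;
        write_base = BITMAP_WRITE_LOW;
    } else if (msr >= 0xC0000000u && msr <= 0xC0001FFFu) {
        index = msr - 0xC0000000u;
        read_base = BITMAP_READ_HIGH;
        write_base = BITMAP_WRITE_HIGH;
    } else {
        return false;
    }

    if (read)
        bitmap[read_base + index / 8] |= (uint8_t)(1u << (index % 8));
    if (write)
        bitmap[write_base + index / 8] |= (uint8_t)(1u << (index % 8));
    return true;
}
```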

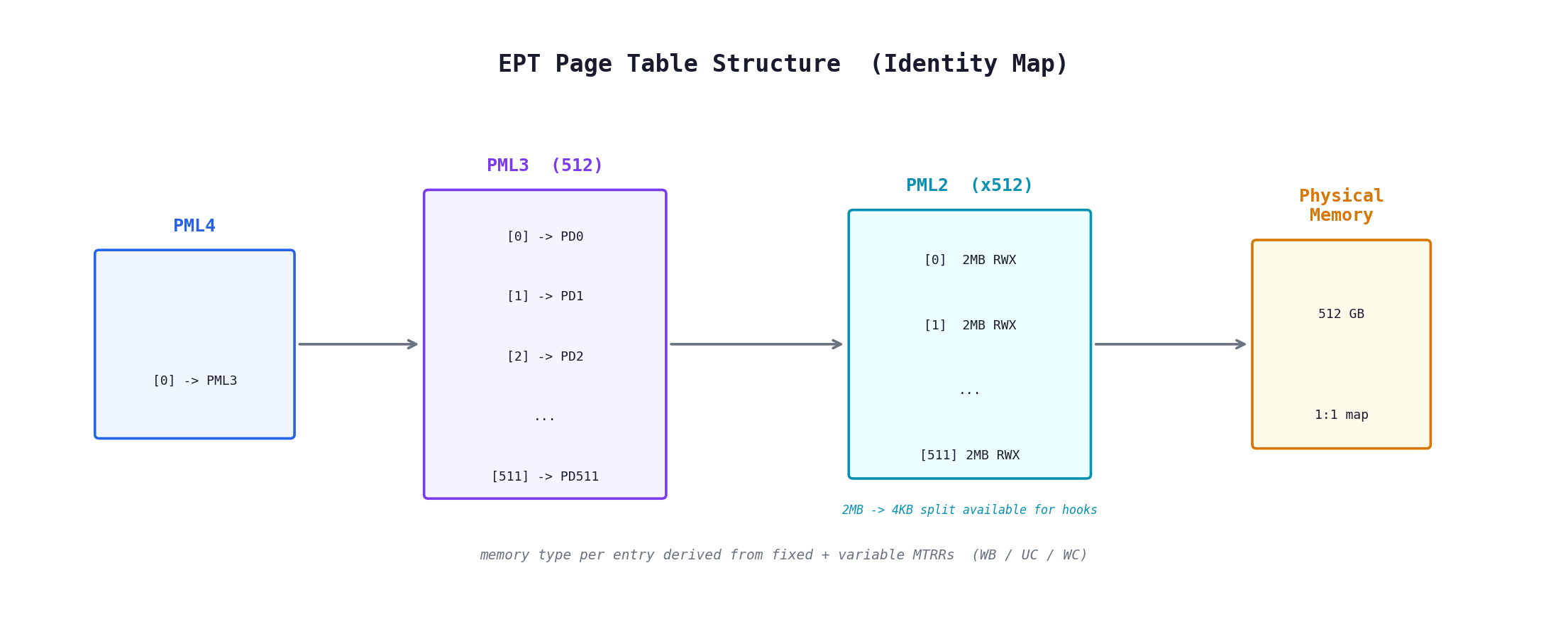

EPT maps all physical memory 1:1 using a 3-level structure:

PML4 (1 entry) -> PML3 (512 entries) -> PML2 (512 * 512 = 262,144 entries)

Each PML2 entry maps a 2MB large page with full RWX permissions; together the 262,144 entries cover 512 GB of physical address space. EPT page tables are allocated per VCPU, so per-core EPT modifications (e.g., hooks) are possible without cross-core synchronization. Large pages are the right default: they minimize TLB pressure and avoid 4KB-level EPT walks for nearly all memory.
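How a physical address lands in this identity map, sketched under the layout above (a single PML4 entry, so only the PML3 and PML2 indices matter). The EPT bit positions (R/W/X in bits 0-2, memory type in bits 5:3, large-page bit 7) are from the Intel SDM; the helper names are hypothetical.

```c
#include <stdint.h>

#define EPT_READ          (1ull << 0)
#define EPT_WRITE         (1ull << 1)
#define EPT_EXECUTE       (1ull << 2)
#define EPT_MEMTYPE_SHIFT 3              /* bits 5:3, e.g. 0 = UC, 6 = WB */
#define EPT_LARGE_PAGE    (1ull << 7)

/* Position of a guest-physical address in a PML4 -> PML3 -> PML2 map. */
static unsigned pml3_index(uint64_t gpa) { return (unsigned)((gpa >> 30) & 0x1FF); }
static unsigned pml2_index(uint64_t gpa) { return (unsigned)((gpa >> 21) & 0x1FF); }

/* Identity-mapped 2 MB entry: it points back at gpa's own 2 MB frame. */
static uint64_t ept_pde_2mb(uint64_t gpa, unsigned memory_type)
{
    return (gpa & 0x000FFFFFFFE00000ull)   /* physical address bits 51:21 */
         | EPT_READ | EPT_WRITE | EPT_EXECUTE
         | ((uint64_t)memory_type << EPT_MEMTYPE_SHIFT)
         | EPT_LARGE_PAGE;
}
```

For example, 0x40123456 falls in PML3 entry 1, PML2 entry 0, and with write-back memory (type 6) its entry is 0x400000B7.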

The EPT memory type for each 2MB page is derived from the CPU's MTRR configuration. Before building the EPT, Ophion reads:

- Fixed-range MTRRs: 64KB, 16KB, and 4KB regions covering 0x00000 - 0xFFFFF (legacy memory)

- Variable-range MTRRs: Arbitrary base/mask pairs for the rest of physical memory
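The fixed-range MTRRs pack eight one-byte memory types into each MSR. A hypothetical lookup that finds the MSR and byte for an address below 1 MB could look like this; the MSR numbers (IA32_MTRR_FIX64K_00000 = 0x250, FIX16K_80000/A0000 = 0x258/0x259, FIX4K_C0000 through FIX4K_F8000 = 0x268-0x26F) are architectural, the function names are not Ophion's.

```c
#include <stdint.h>

typedef struct {
    uint32_t msr;     /* fixed-range MTRR MSR number */
    unsigned field;   /* which of its eight type bytes (0-7) */
} fixed_mtrr_slot;

/* Returns 1 and fills *slot for pa < 1 MB, 0 otherwise (variable-range MTRRs apply). */
static int fixed_mtrr_lookup(uint64_t pa, fixed_mtrr_slot *slot)
{
    uint64_t unit;

    if (pa < 0x80000) {                /* 8 x 64 KB */
        unit = pa / 0x10000;
        slot->msr = 0x250;
    } else if (pa < 0xC0000) {         /* 16 x 16 KB across two MSRs */
        unit = (pa - 0x80000) / 0x4000;
        slot->msr = 0x258 + (uint32_t)(unit / 8);
    } else if (pa < 0x100000) {        /* 64 x 4 KB across eight MSRs */
        unit = (pa - 0xC0000) / 0x1000;
        slot->msr = 0x268 + (uint32_t)(unit / 8);
    } else {
        return 0;
    }
    slot->field = (unsigned)(unit % 8);
    return 1;
}

/* The memory type is byte `field` of the MSR value. */
static unsigned fixed_mtrr_type(uint64_t msr_value, unsigned field)
{
    return (unsigned)((msr_value >> (field * 8)) & 0xFF);
}
```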

Each PML2 entry's memory type matches the MTRR type for its range. If a 2MB page crosses an MTRR boundary (two different types within the same 2MB range), it's flagged for splitting. Getting memory types wrong causes subtle cache coherency bugs -- UC memory mapped as WB can cause stale reads, WB memory mapped as UC tanks performance.

When fine-grained control is needed (e.g., EPT hooks), ept_split_large_page() converts a single 2MB PML2 entry into 512 individual 4KB PML1 entries. Each PML1 entry gets its own MTRR-derived memory type. The infrastructure is in place but no hooks are installed in the current build.
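Splitting keeps the same physical range and permissions and only changes the granularity. The sketch below assumes the caller has already allocated the 4 KB page table (pt, at physical address pt_phys) and supplies a per-page memory-type callback; every name is hypothetical, and memtype_wb is a trivial callback that exists only for illustration.

```c
#include <stdint.h>

#define EPT_RWX        0x7ull
#define EPT_MEMTYPE(t) ((uint64_t)(t) << 3)
#define EPT_ADDR_MASK  0x000FFFFFFFFFF000ull

/* Returns the MTRR-derived memory type for one 4 KB page. */
typedef unsigned (*memtype_fn)(uint64_t pa);

/* Example callback: everything write-back (type 6). */
static unsigned memtype_wb(uint64_t pa) { (void)pa; return 6; }

/* Fills pt[512] to map the same 2 MB as large_pde, one 4 KB page per entry,
 * and returns the non-leaf PDE that should replace large_pde. */
static uint64_t ept_split_2mb(uint64_t large_pde, uint64_t pt[512],
                              uint64_t pt_phys, memtype_fn memtype)
{
    uint64_t base  = large_pde & 0x000FFFFFFFE00000ull;
    uint64_t perms = large_pde & EPT_RWX;

    for (unsigned i = 0; i < 512; i++) {
        uint64_t pa = base + (uint64_t)i * 0x1000;
        pt[i] = pa | perms | EPT_MEMTYPE(memtype(pa));
    }
    /* Non-leaf entry: bit 7 clear, points at the new table, full RWX so the
     * leaf entries decide the effective permissions. */
    return (pt_phys & EPT_ADDR_MASK) | EPT_RWX;
}
```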

VPID tag 1 is assigned to all VCPUs. Without VPID, every VM-exit/entry would flush the entire TLB. With it, guest and host TLB entries coexist, tagged by VPID.

TLB invalidation is handled explicitly at the points that need it:

- MOV CR3: INVVPID type 3 (single-context retaining globals) if the CPU supports it, otherwise type 1 (single-context). This matches bare-metal MOV CR3 behavior -- global kernel TLB entries are preserved. Falls back to type 2 (all-contexts) if the preferred type fails. Skipped entirely if the PCID no-invalidate bit (bit 63) is set.

- INVLPG: INVVPID type 0 (individual address) if supported, else type 2.

- EPT changes: INVEPT type 1 (single-context) or type 2.

All INVVPID capabilities are queried from IA32_VMX_EPT_VPID_CAP at init and cached.
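The selection logic reduces to a few capability-bit tests. The bit numbers below are the INVVPID capability bits of IA32_VMX_EPT_VPID_CAP as documented in the Intel SDM; the function names are hypothetical, and unlike the text (which retries with type 2 after a failed INVVPID) this sketch simply picks type 2 when the preferred type is not advertised.

```c
#include <stdint.h>

/* IA32_VMX_EPT_VPID_CAP (MSR 0x48C) INVVPID type bits. */
#define CAP_INVVPID_INDIVIDUAL_ADDR (1ull << 40)
#define CAP_INVVPID_SINGLE_CONTEXT  (1ull << 41)
#define CAP_INVVPID_SINGLE_RETAIN_G (1ull << 43)

enum { INVVPID_INDIVIDUAL = 0, INVVPID_SINGLE = 1, INVVPID_ALL = 2,
       INVVPID_SINGLE_RETAIN_GLOBALS = 3 };

#define CR3_PCID_NO_INVALIDATE (1ull << 63)

/* Returns the INVVPID type for a guest MOV to CR3, or -1 if no flush is
 * needed. *cr3_out receives the value that is legal to store in GUEST_CR3. */
static int invvpid_for_mov_cr3(uint64_t cap, uint64_t new_cr3, uint64_t *cr3_out)
{
    *cr3_out = new_cr3 & ~CR3_PCID_NO_INVALIDATE;   /* VM-entry rejects bit 63 */
    if (new_cr3 & CR3_PCID_NO_INVALIDATE)
        return -1;
    if (cap & CAP_INVVPID_SINGLE_RETAIN_G)
        return INVVPID_SINGLE_RETAIN_GLOBALS;
    if (cap & CAP_INVVPID_SINGLE_CONTEXT)
        return INVVPID_SINGLE;
    return INVVPID_ALL;
}

static int invvpid_for_invlpg(uint64_t cap)
{
    return (cap & CAP_INVVPID_INDIVIDUAL_ADDR) ? INVVPID_INDIVIDUAL : INVVPID_ALL;
}
```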

This is the core of what makes Ophion invisible. Each mechanism targets specific detection vectors.

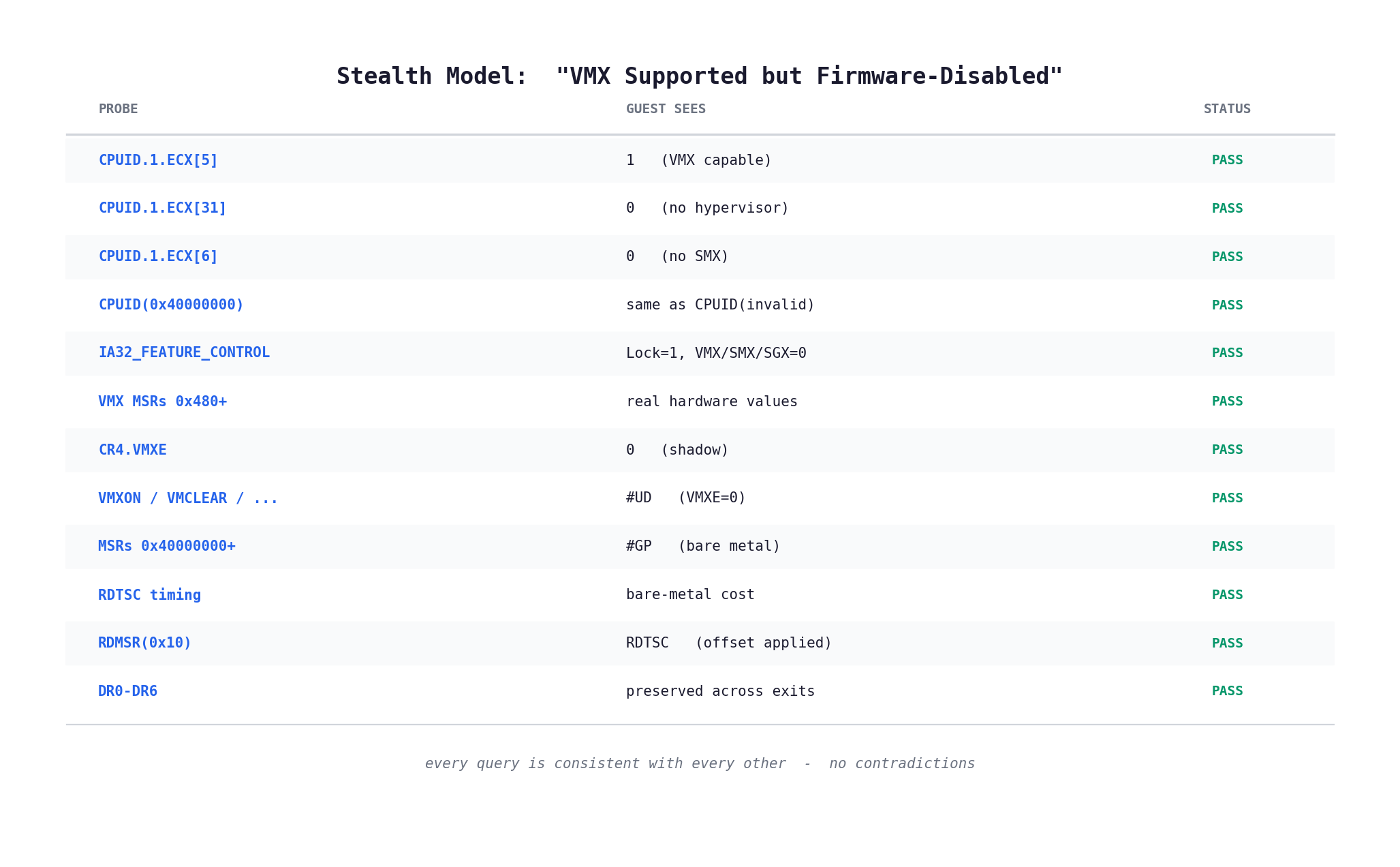

Ophion presents itself as "CPU supports VMX, but firmware has locked it off." This is a real configuration found on corporate and locked-down machines. The guest sees:

| Query | Result | Reason |
|---|---|---|
| CPUID.1.ECX[5] | 1 | Hardware capability bit, always 1 on VMX-capable CPUs |
| CPUID.1.ECX[31] | 0 | No hypervisor present |
| CPUID.1.ECX[6] | 0 | No SMX, consistent with IA32_FEATURE_CONTROL |
| IA32_FEATURE_CONTROL | Lock=1, VMX/SMX/SGX=0 | Firmware locked everything off |
| VMX MSRs (0x480-0x493) | Real hardware values | These exist whenever ECX[5]=1 |
| CR4.VMXE | 0 | VMX not active |
| VMX instructions | #UD | Expected when VMXE=0 |

Every piece is consistent with every other piece. There's no single query that returns a contradictory answer.

VMAware, hvdetecc, and most anti-cheats compare CPUID responses across leaf ranges to find hypervisors. On bare metal, CPUID(0x40000000) returns the exact same values as CPUID(0x13371337) or CPUID(max_standard_leaf + 1) -- they're all out-of-range, and Intel CPUs return the last valid standard leaf's data for all of them. Most hypervisors respond to 0x40000000 with a hypervisor ID string (Hyper-V, KVM, etc.) or zeros. Comparing an obviously invalid leaf against 0x40000000 and seeing different responses is a dead giveaway.

Before VMXON, Ophion caches what the real CPU returns:

```c
__cpuidex(cpu_info, 0x13371337, 0);   // cache response for an obviously invalid leaf
g_stealth_cpuid_cache.invalid_leaf[0] = cpu_info[0];
g_stealth_cpuid_cache.invalid_leaf[1] = cpu_info[1];
g_stealth_cpuid_cache.invalid_leaf[2] = cpu_info[2];
g_stealth_cpuid_cache.invalid_leaf[3] = cpu_info[3];
```

During VM-exit CPUID handling, any leaf in an invalid range gets the cached response. The range check:

```c
// max_std_leaf / max_ext_leaf: highest standard and extended leaves, cached before VMXON
BOOLEAN stealth_is_leaf_invalid(UINT32 leaf)
{
    if (leaf >= 0x40000000 && leaf <= 0x4FFFFFFF)   // hypervisor reserved range
        return TRUE;
    if (leaf > max_std_leaf && leaf < 0x80000000)   // gap between standard and extended
        return TRUE;
    if (leaf >= 0x80000000 && leaf > max_ext_leaf)  // beyond extended range
        return TRUE;
    return FALSE;
}
```

The response is byte-identical to what the bare-metal CPU returns. There is no distinguishable difference.

Two bits are cleared from CPUID leaf 1 when stealth is active:

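```c
if (leaf == 1 && g_stealth_enabled)
{
    // clear ECX[31] (hypervisor present) and ECX[6] (SMX);
    // unsigned shifts avoid the signed overflow of 1 << 31
    cpu_info[2] &= ~((1u << 31) | (1u << 6));
}
```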

- ECX[31] (hypervisor present) -- The primary detection bit used by every anti-cheat and VM-detection library. Windows itself checks this to decide whether to use Hyper-V enlightenments.

- ECX[6] (SMX/TXT) -- Cleared for consistency with IA32_FEATURE_CONTROL, which reports all SENTER/SMX enable bits as 0. Advertising SMX in CPUID while the feature control MSR says it's off is a detectable inconsistency.

ECX[5] (VMX) is intentionally left at 1. This is part of the stealth model -- the guest sees a CPU that supports VMX but has it disabled in firmware. Clearing ECX[5] would require a different consistency story where VMX capability MSRs should #GP on read.
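Putting the two CPUID rules together, here is a hypothetical model of what the guest ends up seeing for a given leaf. It assumes the cache fields hold bare-metal values captured before VMXON, takes the real CPUID output as hw[], and leaves out the g_stealth_enabled check; the struct and function names are illustrative only.

```c
#include <stdbool.h>
#include <stdint.h>
#include <string.h>

typedef struct {
    uint32_t max_std;          /* CPUID(0).EAX, cached before VMXON */
    uint32_t max_ext;          /* CPUID(0x80000000).EAX, cached before VMXON */
    uint32_t invalid_leaf[4];  /* bare-metal answer for an out-of-range leaf */
} cpuid_cache;

static bool leaf_is_invalid(const cpuid_cache *c, uint32_t leaf)
{
    if (leaf >= 0x40000000u && leaf <= 0x4FFFFFFFu) return true;
    if (leaf > c->max_std && leaf < 0x80000000u)    return true;
    if (leaf >= 0x80000000u && leaf > c->max_ext)   return true;
    return false;
}

/* out[] receives the EAX, EBX, ECX, EDX values returned to the guest. */
static void stealth_cpuid(const cpuid_cache *c, uint32_t leaf,
                          const uint32_t hw[4], uint32_t out[4])
{
    if (leaf_is_invalid(c, leaf)) {
        memcpy(out, c->invalid_leaf, sizeof c->invalid_leaf);
        return;
    }
    memcpy(out, hw, 4 * sizeof(uint32_t));
    if (leaf == 1)
        out[2] &= ~((1u << 31) | (1u << 6));   /* hide hypervisor + SMX, keep VMX (bit 5) */
}
```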

When VMX is active, CR4.VMXE (bit 13) must be set -- anti-cheats read CR4 and check this bit. Ophion uses the CR4 guest/host mask to intercept accesses to bit 13. On a guest write to CR4, the handler forces VMXE=1 in the actual VMCS while the shadow reflects the guest's intent:

```c
// desired: the CR4 value the guest tried to write
UINT64 actual = desired | CR4_VMX_ENABLE_FLAG;

// enforce VMX fixed bits on the actual value
fixed.Flags = __readmsr(IA32_VMX_CR4_FIXED0);
actual |= fixed.Fields.Low;    // FIXED0: bits set here must be 1
fixed.Flags = __readmsr(IA32_VMX_CR4_FIXED1);
actual &= fixed.Fields.Low;    // FIXED1: bits clear here must be 0

__vmx_vmwrite(VMCS_GUEST_CR4, actual);
__vmx_vmwrite(VMCS_CTRL_CR4_READ_SHADOW, desired & ~CR4_VMX_ENABLE_FLAG);
```
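The same write path as a pure function, so the effect of the fixed bits can be checked without a VMCS. cr4_write and cr4_pair are hypothetical names; FIXED0/FIXED1 are passed as raw 64-bit values instead of their low halves, which should be equivalent because the upper 32 bits of CR4 are reserved.

```c
#include <stdint.h>

#define CR4_VMXE (1ull << 13)

typedef struct { uint64_t actual; uint64_t shadow; } cr4_pair;

/* actual: value the CPU runs with (VMCS_GUEST_CR4).
 * shadow: value the guest reads back (CR4 read shadow).
 * Bits set in FIXED0 must be 1; bits clear in FIXED1 must be 0. */
static cr4_pair cr4_write(uint64_t desired, uint64_t fixed0, uint64_t fixed1)
{
    cr4_pair r;
    r.actual = (desired | CR4_VMXE | fixed0) & fixed1;
    r.shadow = desired & ~CR4_VMXE;
    return r;
}
```

With an illustrative FIXED0 of 0x2000 and FIXED1 of 0x3727FF, a guest writing 0x20 runs with 0x2020 and reads back 0x20, and writing 0x2020 leaves the guest-visible value at 0x20.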

CR0/CR4 writes also enforce VMX fixed bits (IA32_VMX_CRx_FIXED0/1) on the actual VMCS value. This prevents VM-entry failures from invalid CR states.

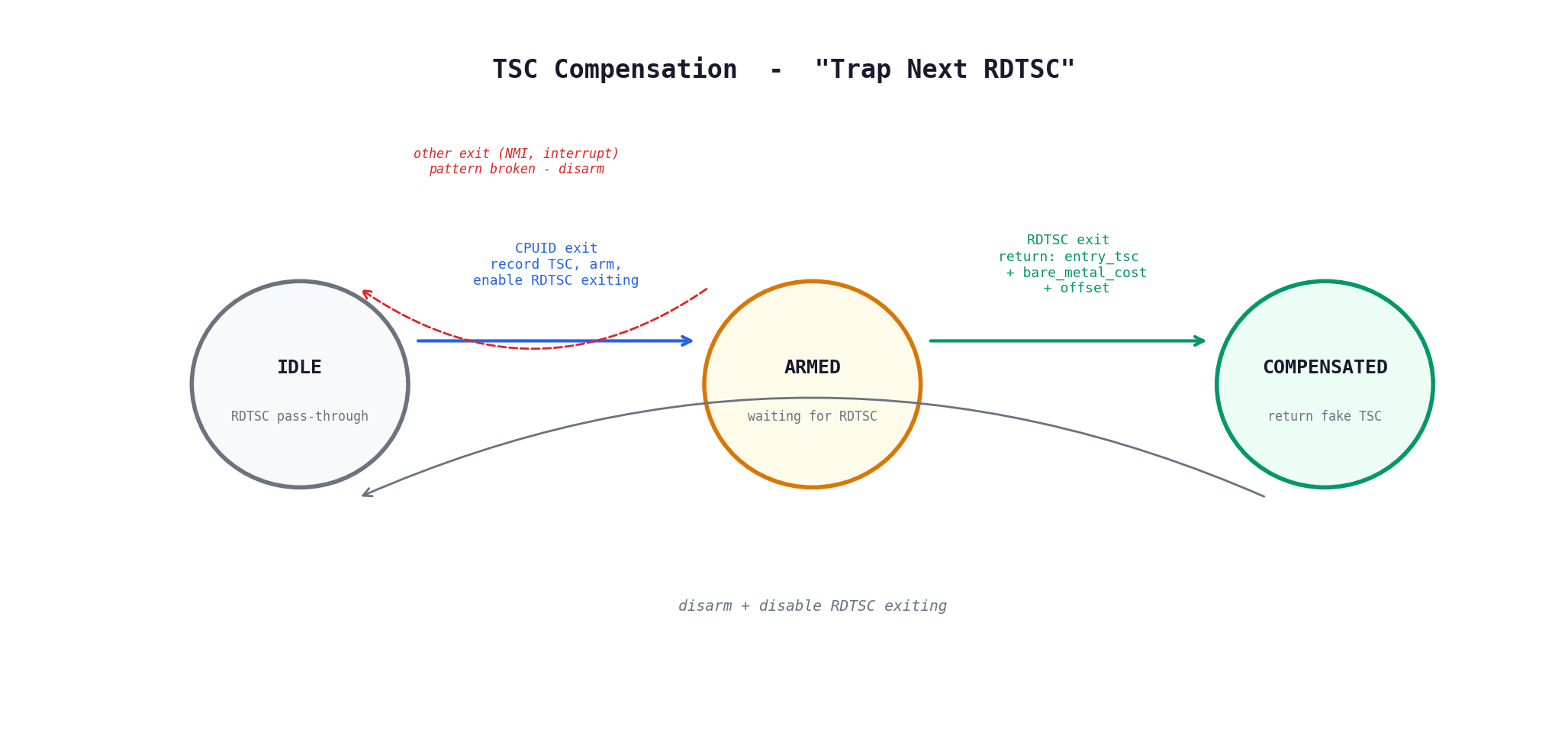

The classic hypervisor timing attack runs RDTSC -> CPUID -> RDTSC and checks the delta. On bare metal, CPUID takes roughly 80-200 cycles on P-cores (significantly more on efficiency cores -- ~350 cycles on Gracemont). Under a hypervisor, the VM-exit + handler + VM-entry adds 500-2000+ cycles. You can't just subtract a fixed offset from TSC_OFFSET because cumulative adjustments break TSC monotonicity and eventually cause watchdog timeouts and TDR.

Ophion uses a "trap next RDTSC" approach -- only intercept the one RDTSC that immediately follows a CPUID exit.

On CPUID VM-exit, arm the trap:

```c
vcpu->tsc_cpuid_entry = exit_tsc_start;   // TSC captured at very start of handler
vcpu->tsc_rdtsc_armed = TRUE;

// dynamically enable RDTSC exiting
size_t proc_ctrl = 0;
__vmx_vmread(VMCS_CTRL_PROCESSOR_BASED_VM_EXECUTION_CONTROLS, &proc_ctrl);
proc_ctrl |= (size_t)CPU_BASED_VM_EXEC_CTRL_RDTSC_EXITING;
__vmx_vmwrite(VMCS_CTRL_PROCESSOR_BASED_VM_EXECUTION_CONTROLS, proc_ctrl);
```

On the next RDTSC VM-exit, return a compensated value and disarm:

```c
tsc = vcpu->tsc_cpuid_entry
    + g_stealth_cpuid_cache.bare_metal_cpuid_cost
    + (UINT64)(INT64)offset_raw;

vcpu->tsc_rdtsc_armed = FALSE;
// disable RDTSC exiting -- back to hardware pass-through
```

If any other exit fires between CPUID and RDTSC (external interrupt, NMI, etc.), the trap disarms: the sequence is no longer a back-to-back CPUID/RDTSC pair, so no compensation is applied.
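A minimal model of the trap-next-RDTSC state machine, assuming the VMCS control writes happen where the comments say. The struct and function names are hypothetical; bare_metal_cpuid_cost would come from the calibration described below, whose "minimum of many samples" step is modelled by min_delta.

```c
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical per-VCPU state mirroring tsc_cpuid_entry / tsc_rdtsc_armed. */
typedef struct {
    uint64_t cpuid_entry_tsc;  /* raw host TSC at the start of the CPUID exit */
    bool armed;                /* RDTSC exiting enabled for exactly one read */
} tsc_trap;

static void on_cpuid_exit(tsc_trap *t, uint64_t exit_tsc_start)
{
    t->cpuid_entry_tsc = exit_tsc_start;
    t->armed = true;            /* real code also sets RDTSC exiting */
}

/* Any other exit breaks the CPUID/RDTSC pair. */
static void on_other_exit(tsc_trap *t)
{
    t->armed = false;           /* real code also clears RDTSC exiting */
}

/* Value returned for a trapped RDTSC; raw_tsc is the current host TSC. */
static uint64_t on_rdtsc_exit(tsc_trap *t, uint64_t raw_tsc,
                              uint64_t bare_metal_cpuid_cost, int64_t tsc_offset)
{
    uint64_t base = t->armed ? t->cpuid_entry_tsc + bare_metal_cpuid_cost : raw_tsc;
    t->armed = false;
    return base + (uint64_t)tsc_offset;
}

/* Calibration: smallest of the measured RDTSC->CPUID->RDTSC deltas. */
static uint64_t min_delta(const uint64_t *deltas, unsigned n)
{
    uint64_t best = UINT64_MAX;
    for (unsigned i = 0; i < n; i++)
        if (deltas[i] < best)
            best = deltas[i];
    return best;
}
```

If the CPUID exit started at TSC 1000 and the calibrated cost is 150, the trapped RDTSC returns 1150 (plus TSC_OFFSET, which is 0 in Ophion); the following RDTSC is not compensated.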

bare_metal_cpuid_cost is calibrated before VMXON by taking the minimum of 200 fenced RDTSC -> CPUID -> RDTSC samples. This gives the true hardware cost without scheduling noise.

TSC_OFFSET is set to 0 and never modified. Only one RDTSC per CPUID exit is intercepted. Every other RDTSC in the system passes through at hardware speed via TSC offsetting. Zero drift, zero monotonicity issues, near-zero performance impact.

RDTSCP uses the same compensation path, with IA32_TSC_AUX passed through.

IA32_TIME_STAMP_COUNTER is intercepted via the MSR bitmap. When intercepted, the actual RDMSR never executes -- the VM-exit handler runs instead. Since __rdtsc() in VMX root returns the raw hardware TSC without the VMCS offset, the handler applies it manually:

```c
case 0x10:
{
    size_t tsc_offset_raw = 0;
    __vmx_vmread(VMCS_CTRL_TSC_OFFSET, &tsc_offset_raw);
    msr.Flags = (UINT64)((INT64)__rdtsc() + (INT64)tsc_offset_raw);
    break;
}
```

Note that this interception is only necessary because the MSR bitmap traps it. Per the Intel SDM (Section 27.6.5), "Use TSC offsetting" applies the same offset to RDTSC, RDTSCP, and RDMSR reads of IA32_TIME_STAMP_COUNTER. Without the bitmap bit set, hardware handles offset application identically for all three. The interception exists so the TSC compensation path can cover RDMSR(0x10)-based timing attacks alongside RDTSC.

IA32_FEATURE_CONTROL (0x3A): a running hypervisor needs EnableVmxOutsideSmx=1 in this MSR (otherwise VMXON raises #GP), and the MSR is readable from the guest. On bare metal without VMX active, it typically reads Lock=1 with everything else 0. The handler reads the real MSR and sanitizes it:

```c
IA32_FEATURE_CONTROL_REGISTER feat = {0};
feat.AsUInt = __readmsr(IA32_FEATURE_CONTROL);

if (g_stealth_enabled)
{
    feat.Lock = 1;
    feat.EnableVmxInsideSmx = 0;
    feat.EnableVmxOutsideSmx = 0;
    feat.SenterLocalFunctionEnables = 0;
    feat.SenterGlobalEnable = 0;
    feat.SgxLaunchControlEnable = 0;
    feat.SgxGlobalEnable = 0;
}
```

Writes inject #GP -- the MSR is locked.
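The bitfield assignments above can also be expressed as a single mask, which makes it easy to see which bits survive. Bit positions are from the Intel SDM definition of IA32_FEATURE_CONTROL; the macro and function names are hypothetical.

```c
#include <stdint.h>

#define FC_LOCK               (1ull << 0)
#define FC_VMX_INSIDE_SMX     (1ull << 1)
#define FC_VMX_OUTSIDE_SMX    (1ull << 2)
#define FC_SENTER_LOCAL_MASK  (0x7Full << 8)   /* bits 8-14 */
#define FC_SENTER_GLOBAL      (1ull << 15)
#define FC_SGX_LAUNCH_CONTROL (1ull << 17)
#define FC_SGX_GLOBAL         (1ull << 18)

/* Lock the MSR and clear every VMX/SMX/SGX enable; unrelated bits such as
 * LMCE (bit 20) keep their real value. */
static uint64_t sanitize_feature_control(uint64_t real)
{
    real |= FC_LOCK;
    real &= ~(FC_VMX_INSIDE_SMX | FC_VMX_OUTSIDE_SMX | FC_SENTER_LOCAL_MASK |
              FC_SENTER_GLOBAL | FC_SGX_LAUNCH_CONTROL | FC_SGX_GLOBAL);
    return real;
}
```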

VMX capability MSRs (IA32_VMX_BASIC, IA32_VMX_PINBASED_CTLS, IA32_VMX_PROCBASED_CTLS, IA32_VMX_EXIT_CTLS, IA32_VMX_ENTRY_CTLS, IA32_VMX_EPT_VPID_CAP, and others): per the Intel SDM, these MSRs are available whenever CPUID.1.ECX[5]=1 (VMX supported), regardless of whether VMX is enabled in IA32_FEATURE_CONTROL; a few of them (IA32_VMX_PROCBASED_CTLS2, IA32_VMX_EPT_VPID_CAP, the IA32_VMX_TRUE_* MSRs) exist only when other capability bits advertise them. Since Ophion leaves ECX[5] intact (VMX supported but disabled), reads of these MSRs should return the real hardware values, and they do: the read handler falls through to __readmsr(), passing the real capability data back to the guest.

Writes inject #GP:

```c
if (target_msr == IA32_FEATURE_CONTROL ||
    (target_msr >= IA32_VMX_BASIC && target_msr <= 0x493))
{
    vmexit_inject_gp();
    vcpu->advance_rip = FALSE;
    return;
}
```

These MSRs are architecturally read-only. IA32_FEATURE_CONTROL is locked.

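Hyper-V, KVM, and other hypervisors expose interface MSRs in the 0x40000000-0x4FFFFFFF range. On bare metal, accessing them raises #GP. The range lies outside both MSR bitmap ranges, so every RDMSR/WRMSR to it causes a VM-exit regardless of the bitmap, and Ophion injects #GP for both reads and writes across the entire range.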

DR0-DR3 and DR6 are not automatically saved/restored by the VMCS on VM-exit/entry (unlike DR7 which is). If the host clobbers these registers during VM-exit processing, a detection tool that sets DR0 to a known address and checks DR6 after a CPUID can spot the discrepancy. More specifically, if TF (trap flag) is set and DR0 is set to the address after CPUID, on bare metal DR6 should show BS (single-step) and B0 (breakpoint 0 matched). If the hypervisor doesn't properly maintain these, DR6 will be wrong.

Ophion shadows DR0-DR3 and DR6 in per-VCPU fields. On every VM-exit, save them from hardware before anything can clobber them. Before VMRESUME, restore them:

```c
// top of vmexit_handler -- save guest state before anything can clobber it
vcpu->guest_dr0 = __readdr(0);
vcpu->guest_dr1 = __readdr(1);
vcpu->guest_dr2 = __readdr(2);
vcpu->guest_dr3 = __readdr(3);
vcpu->guest_dr6 = __readdr(6);

// ... handler dispatch, C code may clobber DRs ...

// before VMRESUME -- restore guest state
__writedr(0, vcpu->guest_dr0);
__writedr(1, vcpu->guest_dr1);
__writedr(2, vcpu->guest_dr2);
__writedr(3, vcpu->guest_dr3);
__writedr(6, vcpu->guest_dr6);
```

The MOV DR handler reads/writes the per-VCPU fields instead of hardware registers. DR7 goes through the VMCS (hardware save/restore). DR4/DR5 alias to DR6/DR7 when CR4.DE=0, and inject #UD when CR4.DE=1, per the Intel SDM.

When the hypervisor advances RIP after handling an instruction (e.g., CPUID), it must check if any hardware breakpoints match the new RIP. On bare metal, the CPU does this automatically. After a VM-exit, the CPU saves the single-step (BS) bit in the pending debug exceptions field but does not check DR0-DR3 -- we must do that manually:

```c
for (int i = 0; i < 4; i++)
{
    if (!(dr7 & (ln_bits[i] | gn_bits[i])))   // not enabled
        continue;
    if ((dr7 & DR7_RW_MASK(i)) != 0)          // not an execution BP (R/W must be 00)
        continue;

    UINT64 drn = 0;
    switch (i)
    {
    case 0: drn = vcpu->guest_dr0; break;
    case 1: drn = vcpu->guest_dr1; break;
    case 2: drn = vcpu->guest_dr2; break;
    case 3: drn = vcpu->guest_dr3; break;
    }

    if (drn == new_rip)
        bp_matched |= bn_bits[i];
}

if (bp_matched)
{
    pending |= bp_matched | PENDING_DEBUG_ENABLED_BP;
    __vmx_vmwrite(VMCS_GUEST_PENDING_DEBUG_EXCEPTIONS, pending);
}
```

Without this, hardware breakpoints on the instruction after a VM-exit-causing instruction would silently fail to fire.
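The DR7 decoding in isolation, as a hypothetical helper that returns the bits to merge into the pending-debug-exceptions field. It assumes the architectural DR7 layout (Ln/Gn at bits 2n/2n+1, R/Wn at bits 16+4n) and uses bit 12 for the enabled-breakpoint flag, mirroring PENDING_DEBUG_ENABLED_BP in the code above.

```c
#include <stdint.h>

#define PENDING_DBG_ENABLED_BP (1ull << 12)

/* Bits to OR into pending debug exceptions for execution breakpoints in
 * DR0-DR3 that match new_rip; 0 if nothing matches. */
static uint64_t exec_bp_hits(uint64_t dr7, const uint64_t dr[4], uint64_t new_rip)
{
    uint64_t hits = 0;

    for (unsigned i = 0; i < 4; i++) {
        uint64_t enabled = (dr7 >> (2 * i)) & 0x3;        /* Li | Gi */
        uint64_t rw      = (dr7 >> (16 + 4 * i)) & 0x3;   /* R/Wi, 00 = execute */
        if (enabled && rw == 0 && dr[i] == new_rip)
            hits |= 1ull << i;                            /* Bi */
    }
    return hits ? (hits | PENDING_DBG_ENABLED_BP) : 0;
}
```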

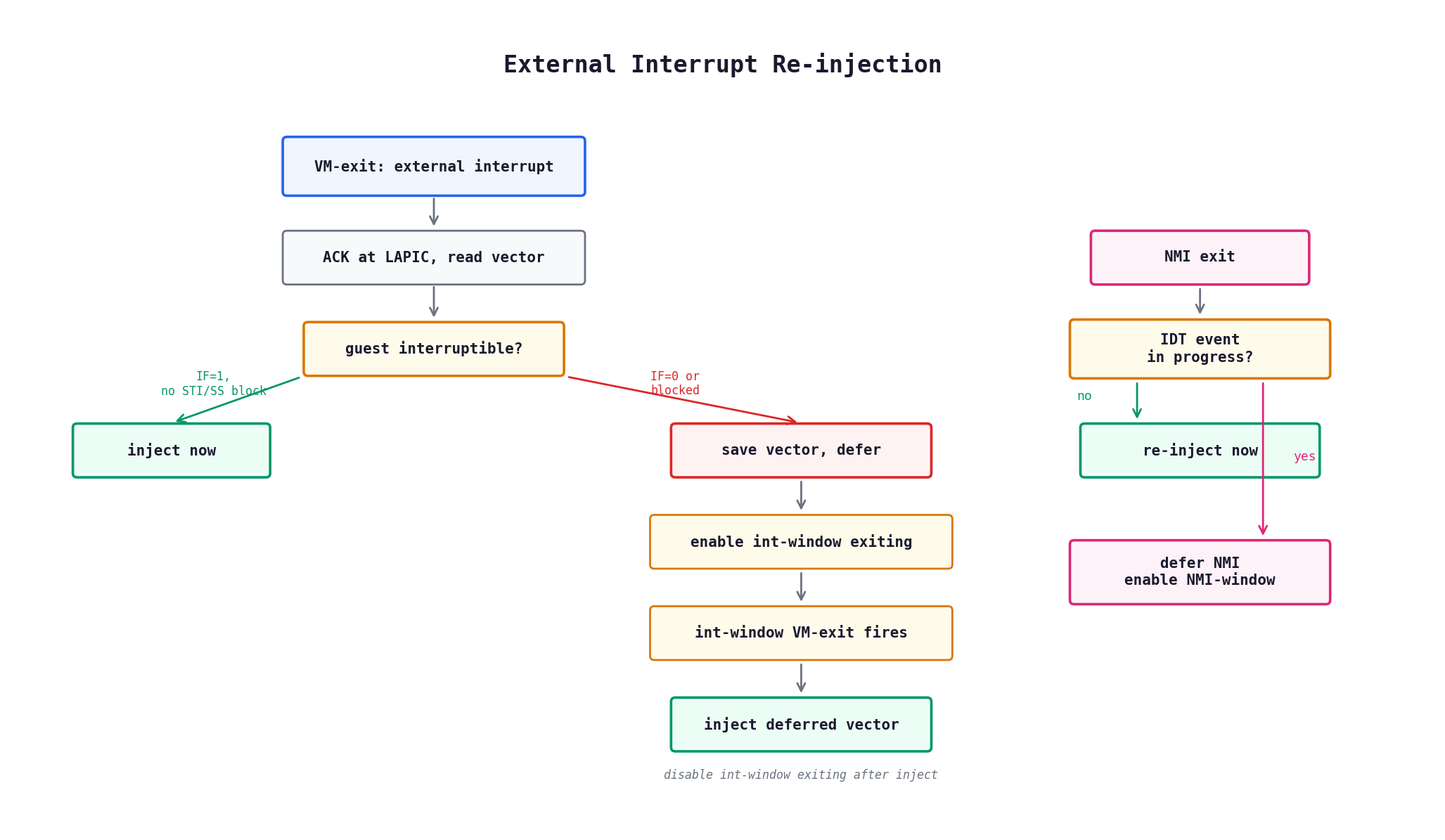

Getting interrupts right is non-negotiable. Broken interrupt handling means TDR, BSOD, or subtle corruption.

With ACK_INTERRUPT_ON_EXIT, the CPU acknowledges the interrupt at the LAPIC on VM-exit and stores the vector. The handler checks if the guest can take it:

```c
BOOLEAN guest_interruptible =
    (rflags_raw & (1ULL << 9)) &&                         // RFLAGS.IF=1
    !(intr_state & (GUEST_INTR_STATE_BLOCKING_BY_STI |
                    GUEST_INTR_STATE_BLOCKING_BY_MOV_SS));

if (guest_interruptible)
{
    vmexit_inject_interrupt(vector);
}
else
{
    // defer: save the vector, enable interrupt-window exiting
    vcpu->pending_ext_vector = (UINT8)vector;
    vcpu->has_pending_ext_interrupt = TRUE;
    proc_ctrl |= (size_t)CPU_BASED_VM_EXEC_CTRL_INTERRUPT_WINDOW_EXITING;
    __vmx_vmwrite(VMCS_CTRL_PROCESSOR_BASED_VM_EXECUTION_CONTROLS, proc_ctrl);
}
```

On the next interrupt-window VM-exit (fires when the guest becomes interruptible), the deferred vector is injected and window exiting is disabled.
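Two small pieces of this path, sketched with hypothetical names: the interruptibility test and the encoding of the VM-entry interruption-information field that an injection helper such as vmexit_inject_interrupt ultimately writes. The field layout (vector in bits 7:0, type in bits 10:8, deliver-error-code in bit 11, valid in bit 31) follows the Intel SDM; how Ophion's helper builds it is not shown in the article.

```c
#include <stdbool.h>
#include <stdint.h>

#define RFLAGS_IF               (1ull << 9)
#define INTR_BLOCKING_BY_STI    (1u << 0)
#define INTR_BLOCKING_BY_MOV_SS (1u << 1)

/* VM-entry interruption-information field. */
#define INTR_TYPE_EXTERNAL        0u
#define INTR_TYPE_NMI             2u
#define INTR_TYPE_HW_EXCEPTION    3u
#define INTR_INFO_DELIVER_ERRCODE (1u << 11)
#define INTR_INFO_VALID           (1u << 31)

static bool guest_interruptible(uint64_t rflags, uint32_t intr_state)
{
    return (rflags & RFLAGS_IF) &&
           !(intr_state & (INTR_BLOCKING_BY_STI | INTR_BLOCKING_BY_MOV_SS));
}

static uint32_t make_intr_info(uint8_t vector, uint32_t type, bool has_error_code)
{
    return INTR_INFO_VALID | (type << 8) | vector |
           (has_error_code ? INTR_INFO_DELIVER_ERRCODE : 0u);
}
```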

With virtual NMIs enabled, an NMI VM-exit (exit reason 0: exception or NMI) sets blocking-by-NMI in the guest interruptibility state. The handler can't blindly re-inject -- VM-entry requires blocking-by-NMI = 0 when injecting type NMI (SDM 26.3.1.1). Since the NMI was intercepted before guest delivery, the handler clears blocking-by-NMI before reinjecting. This is safe: VM-entry re-sets blocking when it delivers the injected NMI (SDM 26.6.1.2), and the guest's IRET clears it naturally.

When an NMI VM-exit interrupts IDT delivery of another event (detected via VMCS_IDT_VECTORING_INFORMATION), the NMI can't be injected immediately -- the IDT event has priority. The NMI is deferred: saved to has_pending_nmi and NMI-window exiting is enabled. The NMI-window exit fires naturally after the guest executes IRET (which clears virtual NMI blocking), and the deferred NMI is injected then.

If a VM-exit occurs while the CPU is mid-delivery of an exception or interrupt through the IDT, VMCS_IDT_VECTORING_INFORMATION records the interrupted event. Ophion re-injects it on the next VM-entry with priority over anything the handler might have queued. Software exceptions and interrupts get their instruction length forwarded. Error code events get their error code forwarded.

When the VM-exit reason is an exception that occurred during IDT delivery, exception combining applies (SDM Vol 3 Table 6-5). Certain combinations produce a double fault or triple fault instead of serial delivery:

- Contributory + contributory -> #DF (contributory = #DE, #TS, #NP, #SS, #GP)

- #PF + contributory or #PF + #PF -> #DF

- #DF + contributory or #PF -> shutdown (triple fault)

- Benign combinations -> reinject the IDT event; the exit exception regenerates during delivery
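The combining rules as a lookup, sketched with hypothetical names. first is the hardware exception from IDT-vectoring information, second is the exception that caused the VM-exit; only hardware exceptions are classified here, and #DF followed by a contributory exception or #PF is treated as a triple fault.

```c
typedef enum { EXC_BENIGN, EXC_CONTRIBUTORY, EXC_PAGE_FAULT, EXC_DOUBLE_FAULT } exc_class;
typedef enum { DELIVER_SERIALLY, DELIVER_DOUBLE_FAULT, TRIPLE_FAULT } exc_outcome;

static exc_class classify(unsigned vector)
{
    switch (vector) {
    case 0: case 10: case 11: case 12: case 13:   /* #DE #TS #NP #SS #GP */
        return EXC_CONTRIBUTORY;
    case 14:
        return EXC_PAGE_FAULT;
    case 8:
        return EXC_DOUBLE_FAULT;
    default:
        return EXC_BENIGN;
    }
}

static exc_outcome combine(unsigned first, unsigned second)
{
    exc_class a = classify(first), b = classify(second);
    int serious = (b == EXC_CONTRIBUTORY || b == EXC_PAGE_FAULT);

    if (a == EXC_DOUBLE_FAULT && serious)
        return TRIPLE_FAULT;
    if (a == EXC_CONTRIBUTORY && b == EXC_CONTRIBUTORY)
        return DELIVER_DOUBLE_FAULT;
    if (a == EXC_PAGE_FAULT && serious)
        return DELIVER_DOUBLE_FAULT;
    return DELIVER_SERIALLY;   /* reinject first; second regenerates */
}
```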

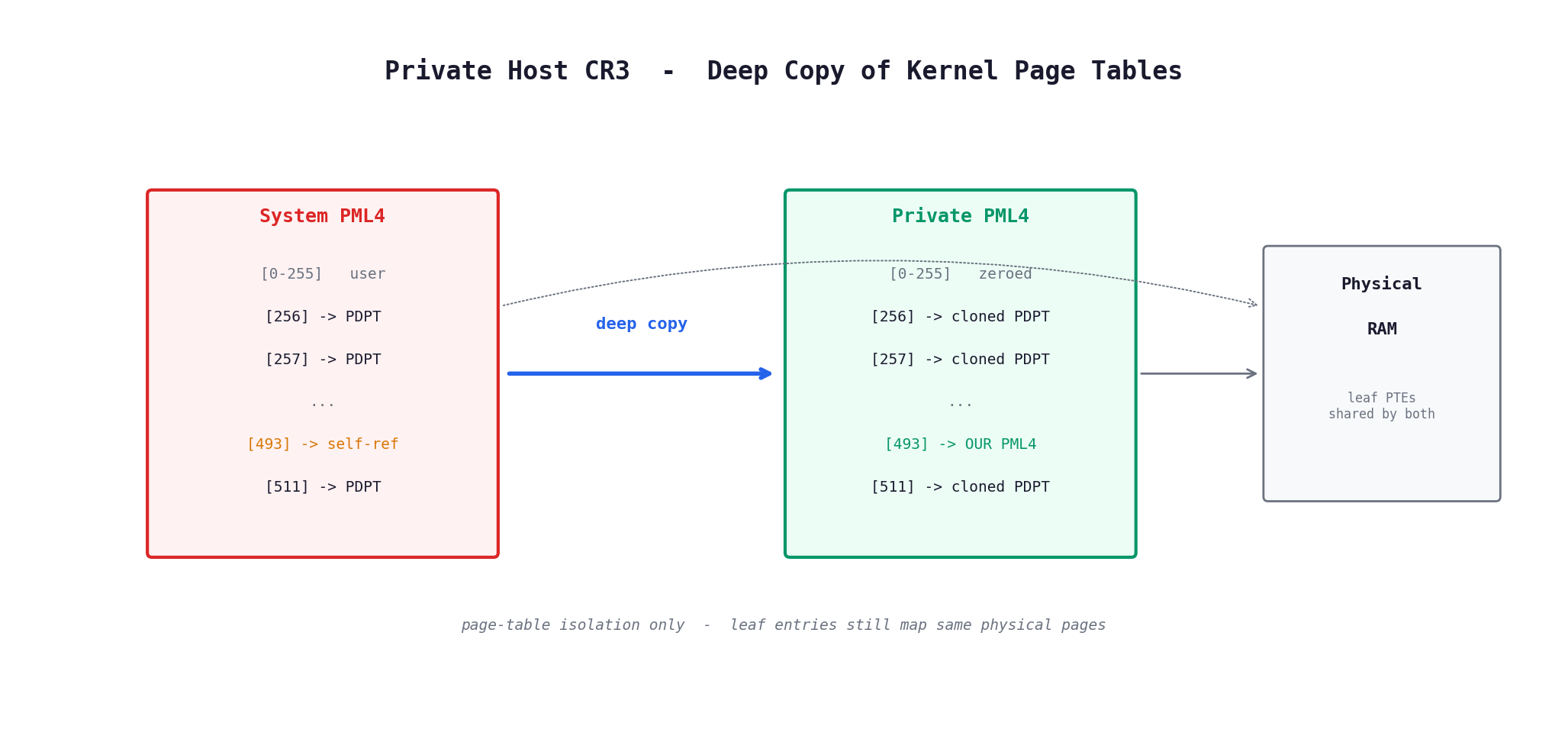

The problem: HOST_CR3 in the VMCS is the page table used during VM-exit processing. If it points to the system process page tables, guest-mode code (including anti-cheat drivers) can corrupt kernel PTEs and cause the hypervisor to fault in host mode.

The solution: hostcr3_build() deep-copies the kernel portion of the system process page tables:

- Read the system process PML4 (from PsInitialSystemProcess->DirectoryTableBase)

- For each present kernel PML4 entry (indices 256-511): recursively clone PDPT -> PD -> PT

- Large pages (1GB, 2MB) are copied as-is -- leaf entries still point to the same physical RAM

- Fix up the self-referencing PML4 entry to point to the private PML4

- Zero user-space entries (0-255) -- host mode never runs user code

The self-referencing fixup is the subtle part -- Windows uses one PML4 entry that points back to itself for page table self-mapping. Without updating it, the private page tables would still reference the original PML4:

```c
for (UINT32 i = 256; i < 512; i++)
{
    if ((our_pml4[i] & PTE_PRESENT) &&
        ((our_pml4[i] & PTE_PFN_MASK) == pml4_pa))   // points to original PML4
    {
        our_pml4[i] = (our_pml4[i] & ~PTE_PFN_MASK) | g_host_pml4_pa;
        break;
    }
}
```
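Why the self-reference matters: the PML4 index of that entry determines the virtual address at which Windows sees its own page tables. A hypothetical sketch that finds the slot and derives the PTE base from it; 0x1ED is the index older Windows versions used (newer ones randomize it), so it serves only as an example.

```c
#include <stdint.h>

#define PTE_PRESENT  1ull
#define PTE_PFN_MASK 0x000FFFFFFFFFF000ull

/* Kernel-half slot whose PFN is the PML4 itself, or -1 if none. */
static int find_self_ref(const uint64_t pml4[512], uint64_t pml4_pa)
{
    for (int i = 256; i < 512; i++)
        if ((pml4[i] & PTE_PRESENT) && (pml4[i] & PTE_PFN_MASK) == pml4_pa)
            return i;
    return -1;
}

/* PTE base exposed by the self-map: index << 39, made canonical. */
static uint64_t pte_base_for(int self_ref_index)
{
    uint64_t va = (uint64_t)self_ref_index << 39;
    if (va & (1ull << 47))
        va |= 0xFFFF000000000000ull;   /* sign-extend bit 47 */
    return va;
}
```

Index 0x1ED gives the familiar 0xFFFFF68000000000; after the fixup the same slot simply points at the private PML4's physical address instead of the original one.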

Physical page table pages are mapped via MmGetVirtualForPhysical() instead of MmMapIoSpace. The former works for all RAM-backed PFNs and doesn't need unmapping. The latter fails in some virtualized environments.

The snapshot is static -- it doesn't track dynamic kernel PTE changes after load. This is fine because all host-mode allocations (VMM stacks, bitmaps, etc.) are done before the snapshot is taken.

The VMCALL interface uses a 3-register signature:

```c
if (regs->r10 != 0x48564653ULL ||          // 'HVFS'
    regs->r11 != 0x564d43414c4cULL ||      // 'VMCALL'
    regs->r12 != 0x4e4f485950455256ULL)    // 'NOHYPERV'
{
    vmexit_inject_ud();
    vcpu->advance_rip = FALSE;
    return;
}
```

Two checks before dispatch:

- CPL check: Read guest CS access rights from the VMCS. DPL must be 0 (ring 0 only). User-mode VMCALL gets #UD.

- Signature check: All three signature registers must match exactly. Any mismatch gets #UD.
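The two checks as one predicate, assuming the CS access-rights value is the raw VMCS field (DPL in bits 6:5). vmcall_allowed is a hypothetical name; the signature constants are the ones shown above.

```c
#include <stdbool.h>
#include <stdint.h>

#define VMCALL_SIG_R10 0x48564653ull          /* 'HVFS' */
#define VMCALL_SIG_R11 0x564d43414c4cull      /* 'VMCALL' */
#define VMCALL_SIG_R12 0x4e4f485950455256ull  /* 'NOHYPERV' */

/* false means: inject #UD and do not advance RIP. */
static bool vmcall_allowed(uint32_t cs_access_rights,
                           uint64_t r10, uint64_t r11, uint64_t r12)
{
    unsigned dpl = (cs_access_rights >> 5) & 3;
    if (dpl != 0)
        return false;                       /* user-mode caller */
    return r10 == VMCALL_SIG_R10 && r11 == VMCALL_SIG_R11 && r12 == VMCALL_SIG_R12;
}
```

A 64-bit kernel code segment (access rights 0x209B) has DPL 0 and passes; a user code segment (0x20FB) has DPL 3 and is rejected before the signature is even examined.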

All VMX instructions (VMCLEAR, VMPTRLD, VMREAD, VMWRITE, VMXON, VMXOFF, VMLAUNCH, VMRESUME, INVEPT, INVVPID) also inject #UD, as does the SMX instruction GETSEC. This is consistent with the guest seeing CR4.VMXE=0 and SMX hidden: on bare metal, executing VMX instructions with VMXE clear causes #UD, and so does GETSEC with CR4.SMXE clear.

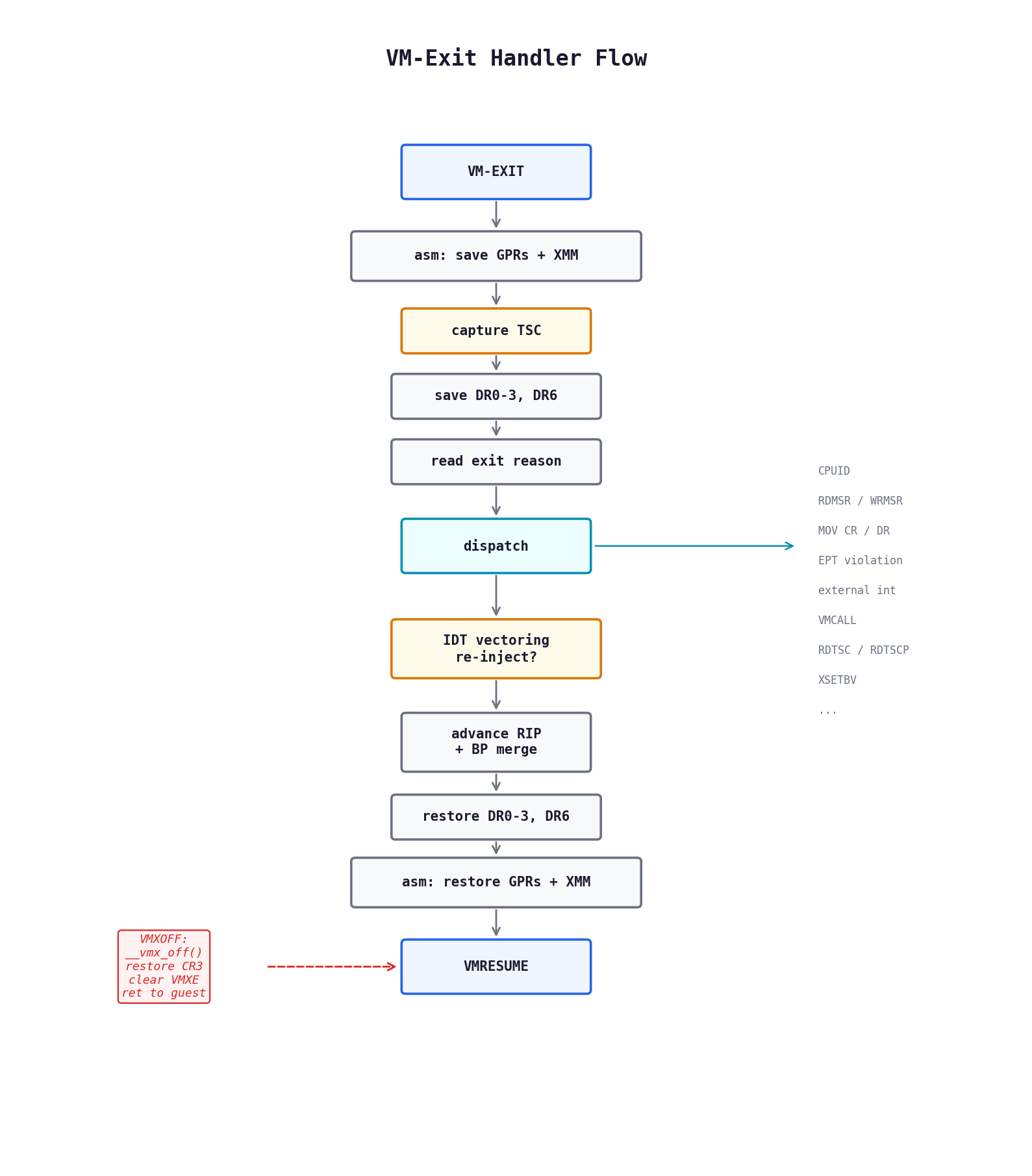

asm_vmexit_handler is HOST_RIP. On every VM-exit the CPU jumps here. The stack layout after all saves:

```asm
; [rsp + 0x000]  rax            (GUEST_REGS starts here)
; [rsp + 0x008]  rcx
; ...
; [rsp + 0x078]  r15
; [rsp + 0x080]  xmm0..xmm5 + mxcsr (0x110 bytes)
; [rsp + 0x190]  rflags
; [rsp + 0x198]  padding
; [rsp + 0x1A0]  <-- original HOST_RSP
; [rsp + 0x1A8]  vcpu pointer (stored at HOST_RSP + 8 by vmx_setup_vmcs)
```

The assembly saves XMM0-XMM5 + MXCSR (volatile under x64 ABI -- C code can clobber these), pushes all 16 GPRs as the GUEST_REGS struct, then calls the C handler:

```asm
mov  rcx, rsp               ; arg1: PGUEST_REGS
mov  rdx, [rsp + 01A8h]     ; arg2: vcpu pointer (from vmm stack)
sub  rsp, 20h               ; x64 ABI: 32 bytes of shadow space for the callee
call vmexit_handler
add  rsp, 20h
```

On FALSE return: restore GPRs, XMM, RFLAGS, jump to vmx_vmresume. On TRUE return (vmxoff): restore GPRs, retrieve guest RSP/RIP from VCPU, set RSP, push RIP, ret to guest code.
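The frame layout can be pinned down in C so the assembly offsets and the struct stay in sync. This is a sketch: the article gives only the offsets of rax, rcx and r15, so the order of the remaining registers (x86 encoding order) is an assumption, and the enum and function names are hypothetical. It also shows that with HOST_RSP = stack top - 16, the VCPU slot at [rsp + 0x1A8] is the last 8 bytes of the VMM stack, which would make VMM_STACK_VCPU_OFFSET equal to 8.

```c
#include <stddef.h>
#include <stdint.h>

/* Assumed order: only rax (0x00), rcx (0x08) and r15 (0x78) are documented. */
typedef struct {
    uint64_t rax, rcx, rdx, rbx, rsp, rbp, rsi, rdi;
    uint64_t r8, r9, r10, r11, r12, r13, r14, r15;
} GUEST_REGS;

/* Offsets from RSP when vmexit_handler is called, per the layout above. */
enum {
    FRAME_GPRS      = 0x000,
    FRAME_XMM       = 0x080,   /* xmm0..xmm5 + mxcsr area, 0x110 bytes */
    FRAME_RFLAGS    = 0x190,
    FRAME_HOST_RSP  = 0x1A0,   /* value loaded from VMCS_HOST_RSP */
    FRAME_VCPU_SLOT = 0x1A8,   /* HOST_RSP + 8 */
};

/* With HOST_RSP = stack_base + stack_size - 16, where the VCPU slot lands. */
static uint64_t vcpu_slot_address(uint64_t stack_base, uint64_t stack_size)
{
    uint64_t host_rsp = stack_base + stack_size - 16;
    return host_rsp + (FRAME_VCPU_SLOT - FRAME_HOST_RSP);
}
```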

The C handler reads exit reason, qualification, guest RIP/RSP, saves debug registers, then dispatches to the appropriate sub-handler. After dispatch:

- Check IDT vectoring and re-inject if needed (priority over handler injection)

- Advance RIP if appropriate, with hardware BP merge into pending debug exceptions

- Restore guest DR0-DR3/DR6 to hardware

- Return FALSE for VMRESUME, TRUE for VMXOFF

XSETBV exits are validated per Intel SDM Vol. 1 Section 13.3 using the hardware XCR0 capability mask from CPUID.0Dh.0 (cached at init). Instead of hardcoding which XCR0 bits are valid, the mask is read from the CPU at init, which automatically supports PKRU, AMX, and future extensions.

Validation rules (all inject #GP on violation):

- ECX upper 32 bits must be 0

- Only XCR0 (index 0) is valid

- Bit 0 (x87) must always be set

- AVX (bit 2) requires SSE (bit 1)

- MPX bits (3-4) must be both set or both clear

- AVX-512 bits (5-7) must all be set together, with AVX+SSE

- AMX bits (17-18) must be both set or both clear

- No bits beyond hardware capability
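The XCR0 bit rules as a hypothetical predicate. supported stands for the cached CPUID.(EAX=0Dh,ECX=0) EDX:EAX mask; this sketch takes the 32-bit XCR index directly and leaves out the check on the upper half of the register. Bit positions are from the Intel SDM.

```c
#include <stdbool.h>
#include <stdint.h>

#define XCR0_X87    (1ull << 0)
#define XCR0_SSE    (1ull << 1)
#define XCR0_AVX    (1ull << 2)
#define XCR0_MPX    (3ull << 3)    /* BNDREGS | BNDCSR */
#define XCR0_AVX512 (7ull << 5)    /* opmask | ZMM_Hi256 | Hi16_ZMM */
#define XCR0_AMX    (3ull << 17)   /* XTILECFG | XTILEDATA */

/* false means: inject #GP. */
static bool xsetbv_valid(uint32_t xcr_index, uint64_t value, uint64_t supported)
{
    if (xcr_index != 0)                            return false; /* only XCR0 */
    if (!(value & XCR0_X87))                       return false; /* x87 always on */
    if (value & ~supported)                        return false; /* beyond hardware */
    if ((value & XCR0_AVX) && !(value & XCR0_SSE)) return false;
    if ((value & XCR0_MPX) && (value & XCR0_MPX) != XCR0_MPX) return false;
    if (value & XCR0_AVX512) {
        if ((value & XCR0_AVX512) != XCR0_AVX512)  return false; /* all three */
        if (!(value & XCR0_AVX))                   return false; /* AVX implies SSE */
    }
    if ((value & XCR0_AMX) && (value & XCR0_AMX) != XCR0_AMX) return false;
    return true;
}
```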

The primary processor controls only request TSC offsetting, MSR bitmaps, I/O bitmaps, and secondary controls. INVD and GETSEC cause VM-exits unconditionally; everything else in this section is handled because must-be-1 bits on some CPUs can force the exit, and an unhandled exit would crash the guest. (CLTS and LMSW exit only when the corresponding CR0 guest/host mask bits are set, so with Ophion's CR0 mask of 0 those handlers are purely defensive.)

- MOV to CR3 -- Strips the PCID no-invalidate bit (bit 63) before writing VMCS_GUEST_CR3 (VM-entry rejects CR3 with bit 63 set). Issues INVVPID for TLB coherency unless no-invalidate was set.

- CLTS -- Clears CR0.TS (bit 3) in both the actual VMCS CR0 and the shadow, re-enforcing VMX fixed bits on the actual value.

- LMSW -- Loads bits 0-3 of CR0 from the source operand. PE (bit 0) can be set but never cleared by LMSW (Intel SDM). VMX fixed bits are re-enforced.

- MOV to/from CR8 -- Pass-through of the TPR register, masked to bits [3:0].

- INVD -- Converted to WBINVD. INVD discards dirty cache lines without writing them back, so executing it on a running system would corrupt memory.

- INVLPG -- Issues INVVPID (individual address, or all-contexts as a fallback) for TLB coherency.

- RDPMC -- Passed through for valid counters. Invalid counters get #GP.

- HLT -- Sets the guest activity state to HLT. The CPU will VM-exit on the next interrupt.

- WBINVD/MWAIT/MONITOR/PAUSE -- No-op, just advance RIP.

- GETSEC -- #UD (SMX is hidden).
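The CR0/CR8 semantics from this list, sketched as pure functions with hypothetical names. They show only the architectural result (LMSW writes bits 0-3 and cannot clear PE, CLTS clears TS, CR8 keeps bits 3:0); the VMX fixed-bit enforcement and the read-shadow update are left out.

```c
#include <stdint.h>

#define CR0_PE (1ull << 0)
#define CR0_TS (1ull << 3)

/* LMSW loads PE, MP, EM, TS (CR0[3:0]) from the source but cannot clear PE. */
static uint64_t lmsw_result(uint64_t cr0, uint16_t source)
{
    return (cr0 & ~0xFull) | (uint64_t)(source & 0xF) | (cr0 & CR0_PE);
}

static uint64_t clts_result(uint64_t cr0)
{
    return cr0 & ~CR0_TS;
}

/* CR8 mirrors TPR[7:4]; only bits 3:0 of the written value are kept. */
static uint64_t cr8_value(uint64_t source)
{
    return source & 0xF;
}
```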

All stealth features are compile-time toggles in stealth.h gated by the runtime g_stealth_enabled flag:

| Toggle | Default | Description |
|---|---|---|
| STEALTH_ENABLED | 1 | Master switch |
| STEALTH_HIDE_CR4_VMXE | 1 | Hide CR4.VMXE via shadow |
| STEALTH_COMPENSATE_TIMING | 0 | TSC compensation (trap next RDTSC) |
| STEALTH_CPUID_CACHING | 1 | Cache native CPUID for invalid leaves |
| USE_PRIVATE_HOST_CR3 | 1 | Isolated host page tables |
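One plausible way the compile-time toggles and the runtime flag combine, shown as a sketch: the defaults match the table, but the #ifndef guards and the stealth_feature_active helper are assumptions, not the actual contents of stealth.h.

```c
#include <stdbool.h>

#ifndef STEALTH_ENABLED
#define STEALTH_ENABLED           1
#endif
#ifndef STEALTH_HIDE_CR4_VMXE
#define STEALTH_HIDE_CR4_VMXE     1
#endif
#ifndef STEALTH_COMPENSATE_TIMING
#define STEALTH_COMPENSATE_TIMING 0
#endif
#ifndef STEALTH_CPUID_CACHING
#define STEALTH_CPUID_CACHING     1
#endif

/* A feature is active only if the master switch, its own toggle, and the
 * runtime flag (g_stealth_enabled in the article) are all set. */
static bool stealth_feature_active(bool runtime_enabled, int feature_toggle)
{
    return STEALTH_ENABLED && feature_toggle && runtime_enabled;
}
```

Building with, for example, `/DSTEALTH_COMPENSATE_TIMING=1` would then turn on the timing compensation without editing the header, if the guards are written this way.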

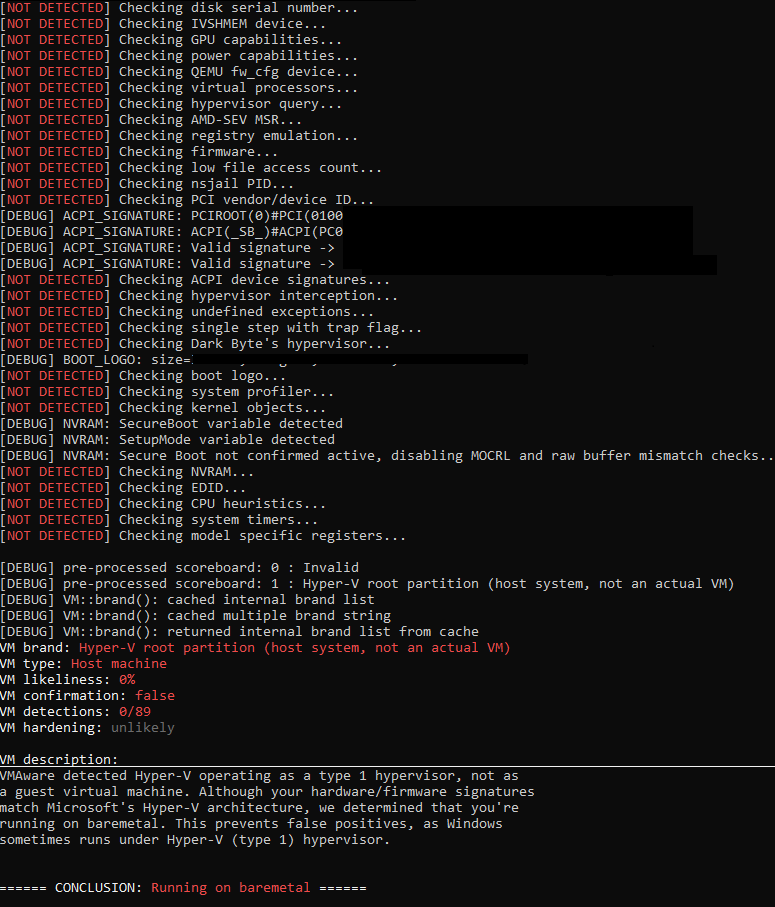

Passes with all stealth toggles enabled:

- hvdetecc -- CR4.VMXE shadow, MSR 0x10 interception, CPUID timing

- VMAware -- DR trap (DR0 + TF on CPUID, DR6 BS+B0), CPUID leaf checks

- checkhv_um -- RDTSC+CPUID+RDTSC timing, CPUID leaf enumeration, brand string

Tested on Intel Core i5-14400F (14th Gen), Windows 10 x64.

- CR4.VMXE write-then-read: On bare metal, writing CR4 with VMXE=1 and reading back shows VMXE=1. Under Ophion, the shadow always strips VMXE, so reading back shows VMXE=0. A detection tool that writes VMXE and checks if it stuck would see a discrepancy. This is the intentional cost of VMXE hiding -- the alternative (not hiding VMXE) is a more common and easier detection vector.

- Performance counters: RDPMC is passed through. A timing attack using IA32_MPERF/IA32_APERF or PMC-based instruction counting could detect VM-exit overhead. Would require MSR interception and offset compensation.

- Wall-clock timing: HPET and LAPIC timer current-count registers are memory-mapped (MMIO) and not intercepted by the current EPT configuration (in x2APIC mode the LAPIC current count is read through MSR 0x839, which the MSR bitmap passes through). An attack reading HPET alongside RDTSC could detect discrepancies. Would require EPT hooks on the HPET MMIO page.

- Host CR3 staleness: The private host page tables are a static snapshot. Dynamic kernel PTE changes after load aren't reflected. Fine for the hypervisor's own allocations (mapped before snapshot) but means host mode uses slightly stale kernel mappings.

- No EPT hooks: The 2MB-to-4KB splitting infrastructure is implemented but no hooks are installed. EPT-based hooking (execute-only pages, split TLB) is the logical next step.

Requires Visual Studio 2022, WDK 10.0.26100.0, MSVC with MASM (x64).

```bat
MSBuild.exe Ophion.sln /p:Configuration=Release /p:Platform=x64
```

Output: build\bin\Release\Ophion.sys (test-signed).

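```bat
REM test signing takes effect after a reboot and cannot be enabled while Secure Boot is on
bcdedit /set testsigning on

sc create Ophion type= kernel binPath= "C:\path\to\Ophion.sys"
sc start Ophion

sc stop Ophion
sc delete Ophion
```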